Abstract

Andragogy, the art and science of helping adults learn, gained popularity in the 1970s, often based on Malcolm Knowles’s (1970, 1984) assumptions regarding adults as learners. This non-neuropsychological learning theory posits that adults see themselves as self-directed learners, can call on their prior experience as a resource for learning, and are motivated by the desire to improve in a social role and are therefore more problem-focused than subject-focused. Adults are more likely to engage when they are intrinsically motivated to learn. Although these assumptions may not hold across all adults at all times, andragogy is a valuable framework for educators to use in determining approaches to teaching adults and addressing issues that may arise in self-direction, prior experience, and motivation.

Chapter Learning Objectives

- Compare and contrast andragogy, pedagogy and heutagogy

- Describe approaches to guiding learners to higher levels of self-determined learning

- Explain how prior experience can support or impede adult learning

- Apply approaches to enhance motivation and readiness to learn

Dr. Adrian Williams is a clinician who is engaging in a formal clinical teaching role for the first time. After three years of full-time practice as a specialist, they are excited to be assigned three trainees. Two of these trainees have limited exposure to the specialty whereas the third, Chris Erickson, has a parent who practices in this specialty. In preparing for this new role, Adrian enlists the help of a mentor. The mentor advises Adrian to consider using adult learning theories to guide their teaching decisions and create learning environments that will support the trainees’ professional growth. The mentor reminds Adrian that in addition to clinical competence, trainees benefit from developing skills for lifelong learning.

- What are the underlying assumptions of the pedagogical, andragogical, or heutagogical approach to teaching?

- What are the roles of the educator and learner in adult learning?

Adrian is reflecting on their first week of clinical teaching with three trainees. Each one has learning strengths and challenges. In considering the learning needs of individual trainees, they realize that although each has similar foundational preparation, their clinical learning needs are quite diverse, as are their approaches to lifelong learning. Adrian reviews the assumptions they had about adult learners and applies them to new knowledge regarding each trainee to further differentiate instruction in the coming weeks.

- How do you as an educator decide which approach is best in each situation?

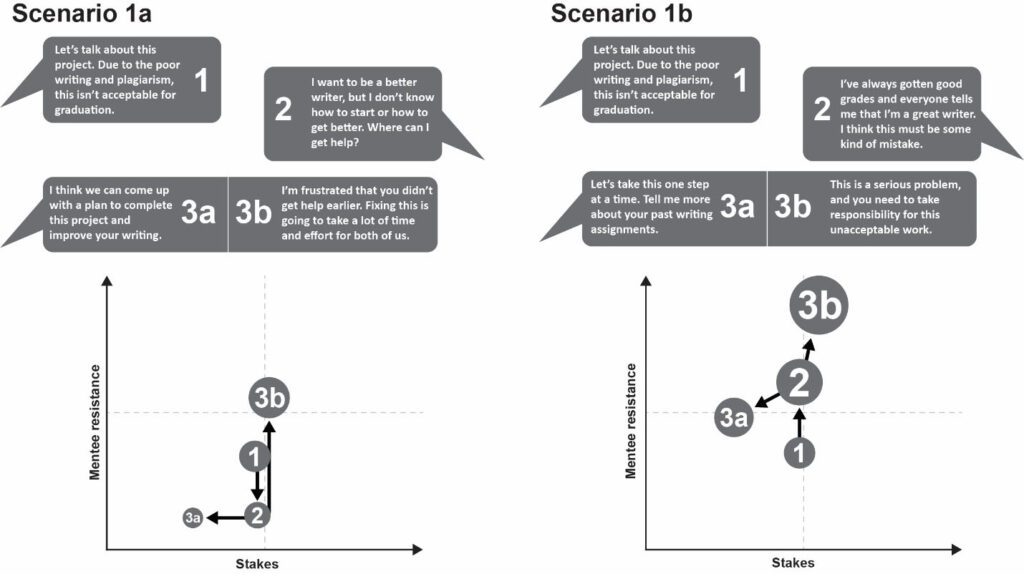

The first trainee is Tyler Miller. Tyler’s last prior rotation was in a related specialty. They have shown progress over the past week and express interest in learning more about this specialty, even though it is not one they are planning to pursue. Tyler has demonstrated clinical abilities through thorough documentation of patient care. Tyler typically resists feedback in the moment but does incorporate changes into their clinical performance. When given an unfamiliar situation, Tyler will find the evidence to improve their knowledge base but rarely interacts with others on the team. Tyler is sometimes uncomfortable in communicating with patients, particularly when other team members are in the room. Most concerning is Tyler’s approach to presenting patient cases to the team. They appear anxious and hesitant to answer questions. Adrian shares their observations during an end-of-week meeting, and Tyler discloses that they had several negative experiences with the rotation leader during a previous rotation and were unable to find anyone to help them navigate the situation. Tyler states, “What helped me get by was to just keep my head down.”

- How can Adrian use Tyler’s prior clinical knowledge to enhance their learning?

- How might Tyler’s experiences in previous rotations be impacting their learning?

- What might Adrian do to establish a learning environment where Tyler can move forward in team communication?

The second trainee is Shane Smith. Shane has strong interpersonal skills and easy rapport with patients and staff alike. Their assessment skills are somewhat lacking, and they are slow to respond to feedback. It has become noticeable that Shane does not take much initiative in pursuing learning beyond clinic hours, preferring to socialize with staff. For example, on Wednesday, Shane was tasked with investigating a particularly complex diagnosis of a patient that the team encountered. Shane presented a cursory review of the patient’s condition and care during the next day’s teaching rounds. Later, Adrian discovers that Shane shared with peers that they didn’t know where to find additional research evidence regarding the case and “besides, this isn’t something I will see in my future practice.” Adrian contemplates how to respond to this student, who does not appear motivated to learn.

- Should Adrian impose consequences for not doing more outside learning?

- What might Adrian investigate relative to the statement that “this isn’t something I will see in my future practice”?

- Which education practices might Adrian employ to support greater motivation for self-directed learning?

The third trainee is Chris Erickson, whose parent practices in this specialty. Chris has significantly greater knowledge in the specialty, beyond what would be expected. They are excelling in the rotation; things that are challenging to other students seem to come naturally. Chris expresses that this rotation is of particular interest, as it is a specialty they would like to pursue. During this first week, Chris has responded positively to feedback, setting learning goals to improve current performance and asking for help when needed. With encouragement, Chris is beginning to ask patient care questions for which there is no easy answer but is not taking the initiative to address these more complex problems. Adrian senses that Chris may be ready for a greater challenge and to be guided into higher levels of self-directed learning.

- What observations has Adrian made that indicate Chris may be ready for greater learning independence?

- What self-directed learning skills should Adrian teach or reinforce to help Chris be more independent in lifelong learning?

- What type of learning experiences might benefit Chris and why?

It is nearing the end of the rotation, and each learner has made progress in both their clinical and learning skills. Adrian reflects on how the application of adult learning theories supported the learning of these diverse trainees.

Discussion

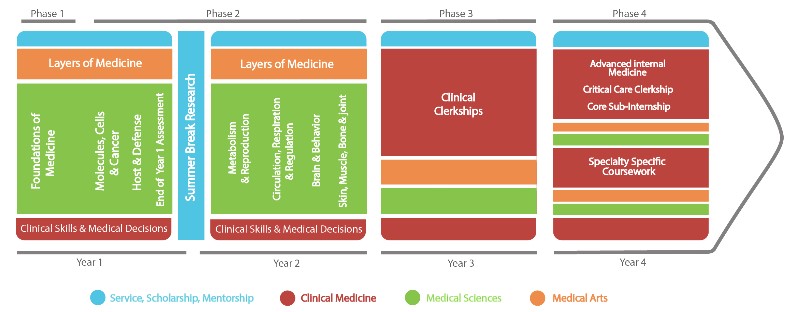

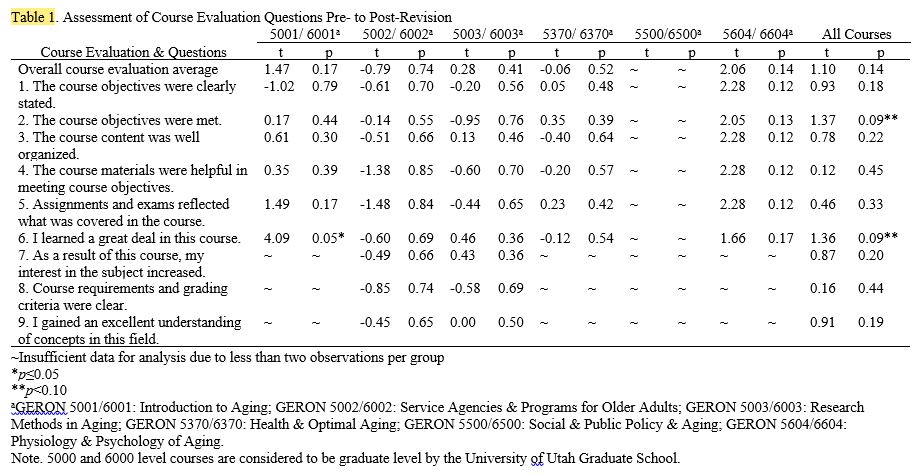

When educators approach teaching decisions, it is important to consider the context in which learning takes place, the nature of the content to be learned, and the characteristics of the learners (Pratt, 2016). Clinical teaching is challenging, as education occurs within the context of providing high-quality patient care while attending to learner needs. Among the learning goals Dr. Williams desires for their trainees is instilling values and skills pertaining to lifelong learning. One way to understand broad approaches to teaching is to consider a continuum of pedagogical frameworks that vary in goals of instruction, the role of the educator, and the degree of learner self-direction.

Assumptions underlying pedagogical frameworks

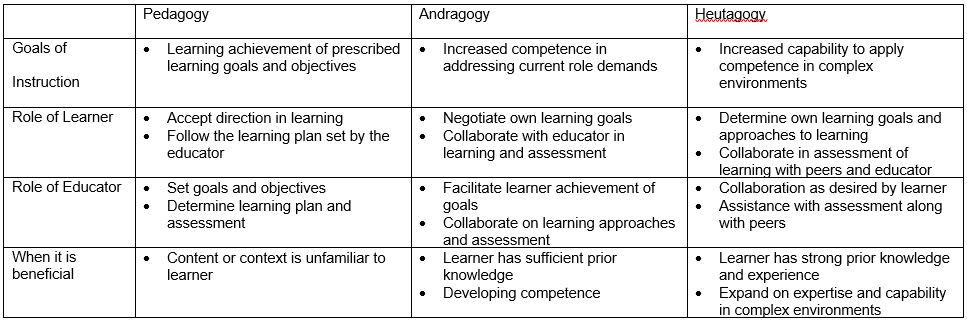

Pedagogy is historically defined as the art and science of teaching, though in contemporary writing, it is associated with teaching children. Andragogy is defined as the art and science of facilitating adult learning (Knowles, 1970). Heutagogy is the art and science of teaching adults blended with complexity theory (Hase & Kenyon, 2007). There are situations in which each approach is appropriate to use with adult learners.

Pedagogy is characterized by a learning environment where the teacher leads the learning experience, and students are in a dependent position. Students are primed to learn what they are told they need to learn in order to progress within a formal academic setting. Although this is often considered less appropriate for adult students, there are situations in which an educator may choose to exercise more control. Machynska and Boiko (2020) suggest that when a learner’s prior experience is not sufficient (such as when large amounts of new information become available) or when prior knowledge makes it difficult for learners to accommodate new information, a more directive approach may initially be warranted.

The idea that the art and science of teaching adults (andragogy) is different from teaching children gained popularity in the early 1970s (Merriam & Baumgartner, 2020). The most prominent of the theories and frameworks was introduced by Knowles (1970) as a set of assumptions about adult learners that could be used to derive best practices in adult education. Initially, there were four assumptions in the framework: that adults 1) see themselves as self-directed learners, 2) use their life experiences as a resource for learning, 3) become ready to learn based on developmental tasks related to their social roles, and 4) are more problem-focused, with a desire for immediate application of learning (Merriam & Baumgartner, 2020; Wang & Hansman, 2017). Knowles (1984) later added two assumptions: that internal motivation is more influential in learning progress, and that adults prefer to know why they need to learn something. Educators can use these assumptions to inform their teaching practice as they assess the needs of their learners.

Andragogy is not without its critics. Each assumption can be challenged in terms of universality; not all adults are ready to be self-directed learners. Prior experience may also create challenges for learning and require a certain amount of unlearning in the process. Adults may choose to learn for the joy of learning itself rather than to solve an immediate problem. Additionally, andragogy neglects to address the social context of learning, focusing primarily on individual development. The goal of andragogy focuses on developing competence in one’s social role (Merriam & Baumgartner, 2020). However, developing competence that can be reproduced in familiar situations is not enough for practice in highly complex health care systems characterized by high levels of uncertainty. Moving from andragogy to heutagogy offers a more advanced level of preparation.

Heutagogy proposes an approach to teaching that assists learners in moving beyond competence to capability through self-determined learning (Hase & Kenyon, 2007). The goal of heutagogy is to equip learners with the skills to set their own learning goals, reflect on their learning processes and goal attainment, and utilize double-loop learning processes to fix problems and challenge their underlying assumptions about how to approach the problem (Abraham & Komattil, 2017). Responsibility for learning transfers to the learner, and opportunities that arise within the complex context of clinical practice serve as catalysts for learning. Whereas in andragogy the educator continues to be significantly involved in guiding the learning process, the educator in heutagogy serves more as a coach, providing feedback and facilitating learner reflection on both gains and process.

Role of the Educator

The role of the educator, according to adult learning theory, is characterized as facilitator or helper. This begins with the educator considering their relationship to the learner as one not tasked with transmitting knowledge but with supporting the process of learning. To be successful, the educator and learner form an alliance to negotiate learning goals and processes. It is important that the educator develops a trusting relationship with the learner through empathy, genuineness, and acceptance. The facilitator is tasked with helping the learner carry out their own goal-directed learning process: negotiating learning goals in relation to the curriculum in an academic setting, providing encouragement and learning guidance, connecting the learner to resources, and assisting in the evaluation of learning outcomes (Merriam & Baumgartner, 2020).

Educators can refer to the assumptions made about adult learners to select approaches based on trainee strengths and areas for growth. Using adult learning theory assumptions to underpin our decisions, we will further explore how to create learning environments in which every learner can thrive through self-directedness, the role of prior experience, and motivation/readiness to learn.

Prior experience as a resource for learning

The benefit of prior experience as a resource for learning is one of the underlying assumptions of andragogy. Adults acquire knowledge through life experiences; however, not all adults have experiences that connect to current learning tasks, and some experiences may present a barrier through misconceptions or negative associations (Merriam & Baumgartner, 2020). Each individual’s knowledge base is uniquely built through the construction of schemas or “patterns of thought… that organize categories of information or actions and (define) the relationships among them” (van Merrienboer, 2016, p. 15). The function of schemas is to combine previously separate elements into a single element, thus easing the load on limited working memory when recall and manipulation of information are required. Learning is a result of consciously constructing and elaborating on these schemas when new information or new connections are introduced. Educators can leverage the power of prior learning by asking trainees to retrieve related knowledge prior to introducing a new topic, helping them relate new knowledge to personal experience, and encouraging elaboration as a way to expand and strengthen these schemas (van Merrienboer, 2016).

In addition to knowledge in the field, a learner’s ability to function as part of the health care team can be impacted by positive and negative interpersonal or social experiences related to learning. Past life traumas outside or within a learning environment can manifest in suboptimal learning behaviors. For example, humiliation as a method to motivate learners, such as questioning that is perceived as overly harsh, unfortunately still exists within health professions education. This can have a lingering detrimental impact on learning mediated by a loss of confidence and professional satisfaction (Nagoshi, Hahn & Littles, 2019), as we see manifested in our learner’s reluctance to interact with the team.

Educators can use the principles of trauma-informed care to support those who are struggling with past experiences and to set a positive tone for all learners. These principles include creating spaces where both physical and psychological safety exist, being transparent in teaching, promoting peer support, collaboration, and empowerment to encourage and motivate all students (Brown, et al., 2021). Educators can role model team communications that accept not knowing and mistakes as opportunities for growth, share personal stories relevant to the situation, and develop relationships with each learner (Brown, et al., 2021).

Struggling learners often benefit from debriefing of difficult experiences. One guideline for debriefing draws on the peak-end rule, which describes how memories of past experiences are formed. The peak-end rule states that memories are framed and based mainly on the peak intensities and conclusion of the experience (Cockburn et al., 2015). In this context, the instructor can utilize reflective thinking with the student to analyze and frame the peaks and conclusion of the clinical experience. Questions such as what was learned or gained can help reframe the experience. The instructor can also guide the student to apply reflection to past negative experiences, both to reframe the memory of the experience and to prepare for future experiences.

When working with trainees, and indeed anyone who is learning, incorporating the learner’s prior experiences has significant benefits: it facilitates the acquisition of new knowledge and skills and helps address any gaps in knowledge or negative experiences in prior educational settings. Attending to this aspect of adult learning theory demonstrates respect and is an important component of developing lifelong learning skills.

Supporting Intrinsic Motivation

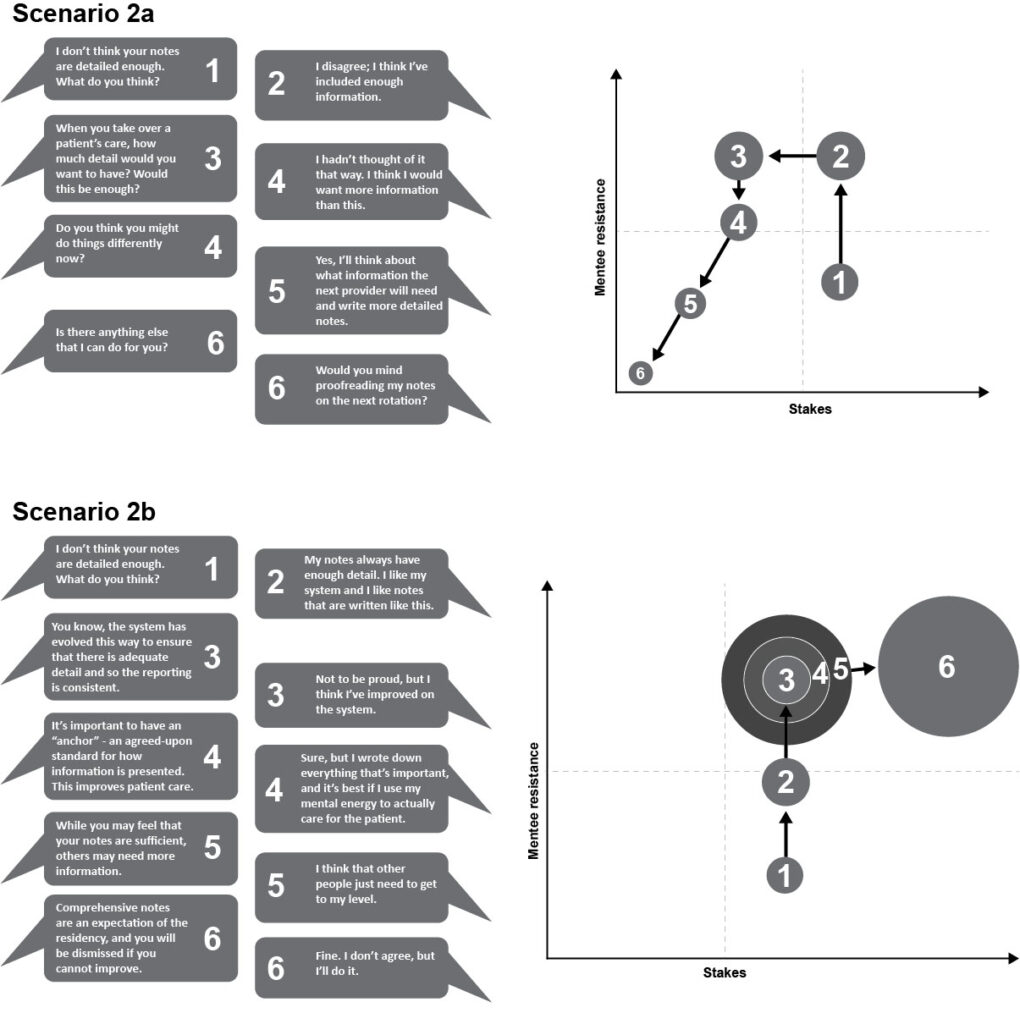

Another characteristic found in andragogy is that adults learn best when they are intrinsically motivated, i.e., when they have a desire to learn based on interest, enjoyment, and inherent satisfaction (Ryan & Deci, 2020). However, this natural tendency can be supported or thwarted by teachers, peers, organizations, and learning environments. Our learners may not demonstrate this intrinsic motivation; its absence often appears as the trainee not being serious about the work or not engaging in self-directed learning. When intrinsic motivation is lacking, there is often an appeal to various extrinsic motivators. Extrinsic motivation is nuanced, ranging from highly externalized motivators such as rewards and punishments for compliance to increasingly autonomous extrinsic motivation that is self-selected based on values and consistency with self or professional identity (Ryan & Deci, 2020). Although adult learners do respond to extrinsic motivation (grades, acceptance by peers and educators, sense of duty), intrinsic motivation leads to greater learning. While imposing consequences on adult learners may change behavior in the short term, focusing on ways to stimulate intrinsic motivation will lead to long-term benefits (Wang & Hansman, 2017). One approach to structuring the environment to promote intrinsic motivation is through self-determination theory. According to this theory, motivation rests on three pillars: autonomy, competence, and relatedness (Ryan & Deci, 2020). Attention to each of these areas can help the educator promote motivation for learning.

According to adult learning theory, motivation in adult learners is tied to the characteristics of readiness to learn, problem-solving orientation, and the need to know why one is being asked to learn (Knowles, 1984). Readiness to learn is driven by a perceived need to better function within chosen social roles (problem-solving and immediacy of application), in this context as a health professional. Given limited exposure to the breadth of practice, it may be necessary for the educator to help the learner envision the connections between current learning and future performance expectations. Another tactic is to help the learner tap into their individual professional interests to find growth opportunities within the learning environment (Orsini et al., 2015; Thammasitboon et al., 2016; van der Goot et al., 2019). Readiness to learn and the need to know the why behind what one is being asked to learn can be seen as aspects of autonomy, supporting the learner’s “sense of initiative and ownership in one’s actions” (Ryan & Deci, 2020, p. 1). This supports Knowles’s (1984) assumption that adults perceive themselves as self-directed learners.

In addition to autonomy, motivation is supported through a sense of competence, defined as the experience of effectiveness and mastery (Orsini et al., 2015). Supporting the development of competence includes behaviors such as giving timely feedback, introducing progressively more complex and challenging situations to manage, and encouraging vicarious learning while providing the supports needed to maintain safety (Orsini et al., 2015; van der Goot et al., 2019). Adult learners are motivated by the need to solve problems, and success in managing increasingly complex or ill-defined problems promotes a justified assessment of growing competence. Facilitating competence is directly related to growing autonomy as more responsibility is transferred to the learner.

Underlying this cycle of growing competence and autonomy is a sense of relatedness. This is demonstrated in educator-learner relationships where each is willing to share academic and professional experiences and to give and receive feedback, all within a psychologically safe environment. This is an aspect that is not well described by andragogy but is increasingly recognized as crucial for promoting learner growth. Although being able to be vulnerable, admit mistakes, and welcome feedback is important for learning, the clinical learning environment presents challenges (Dolan, Arnold & Green, 2019). Assessment can be too closely tied to grades or other evaluative documentation rather than focusing on formative assessment for learning. Implicit bias can influence assessments, even when they are criterion-based. Educators often do not have enough time to attend to assessment in ways that build trusting relationships. These challenges can be mitigated by adopting a mastery orientation that rewards growth based on feedback and reflection, with time to achievement being flexible. In addition, including learners in designing assessments not only improves relatedness but supports autonomy and competence as well (Dolan, Arnold, & Green, 2019). Relatedness also encompasses creating communities of practice, including peers, to connect around professional and scholarly activities (Orsini et al., 2015; Thammasitboon et al., 2016). Sharing a common humanity in the pursuit of quality patient care promotes learning for all involved.

Promoting Self-Direction in Learning

As educators, we want to recognize when our trainees are ready to become more independent in pursuing their learning. Knowles defined self-directed learning as “a process in which individuals take the initiative, with or without the help of others, in diagnosing their learning needs, formulating learning goals, identifying human and material resources for learning, choosing and implementing appropriate learning strategies, and evaluating learning outcomes” (1975, p. 18). Self-direction lies on a continuum from learners who depend on others for all aspects of learning to independent, self-determined learners who develop their capability to perform in increasingly complex environments (Hase & Kenyon, 2007). The self-determined learner not only establishes their goals but also reevaluates their goals and changes them according to their needs and level of progression. Learners advance in their readiness for self-directed learning as they expand their prior knowledge and experience and discover their internal motivation for further learning. This provides a fertile field for promoting greater self-direction in learning.

The process of self-directed learning also lies on a continuum from linear to interactive. Linear models follow a more traditional step-by-step progression toward goals, involving significant pre-planning. On the other end of the spectrum, the interactive model is driven by opportunities in the learning environment and is inherently more spontaneous, arising from a combination of learners who take responsibility for their learning, processes that encourage learners to take control, and an environment that supports learning (Merriam & Baumgartner, 2020). This interactive model provides many benefits in the clinical setting, including greater adaptability than a linear model.

Within an interactive model, the learner seeks experiences within their environment, applies past and new knowledge, and capitalizes on the learning environment’s spontaneity (Merriam et al., 2007). These experiences often occur in clusters or sets of experiences. These clusters eventually form the whole of the experience, as they combine in context with other learning clusters (schemas).

Along the continuum, it is incumbent upon educators to assist learners as needed to set goals, locate resources, participate in learning activities, and assess progress. Educators should also encourage learners to ask their own questions. Another important skill is the ability to monitor one’s own process and progress in learning. Learners can be encouraged to move from single-loop learning, which focuses only on outcomes, to a double-loop process where the learner pauses to evaluate their processes and the assumptions that underlie their learning in addition to analyzing outcomes. In essence, the learner is guided to ask themselves why they do what they do; this additional depth of metacognition moves the learner further along the continuum toward self-determined learning (Jho & Chae, 2014).

The role of the educator includes constructing processes and environments that are conducive to learner self-direction (Merriam & Baumgartner, 2020). This includes identifying or creating authentic learning opportunities for trainees. For example, Thammasitboon et al. (2016) describe the implementation of scholarly activities within clinical practice to promote greater self-determination in learning. The trainees were able to select areas of professional interest and pursue a project with educator facilitation, with positive results for the learners. This activity promoted the learners’ sense of autonomy and competence, thus enhancing their motivation while they learned valuable skills in self-directed learning.

Conclusion

Adult learning theory (andragogy and heutagogy) serves as a basis for understanding the needs of our learners. The assumptions of this model include that adults prefer to see themselves as self-directed, want to know the why behind what they are learning, use prior experience for learning, and are motivated intrinsically by a desire to meet their current challenges. Educators can create and sustain learning environments and relationships that support learners in connecting past and current learning with future expectations while modeling and rewarding self-directed and self-determined learning.

References

Abraham RR & Komattil R. (2017). Heutagogic approach to developing capable learners. Medical Teacher, 39(3):295-299. doi: 10.1080/0142159X.2017.1270433.

Brown, T., Berman, S., McDaniel, K., Radford, C., Mehta, P., Potter, J., & Hirsh, D.A. (2021). Trauma-Informed Medical Education (TIME): Advancing Curricular Content and Educational Context. Academic Medicine, 96(5), 661-667.

Cockburn, A., Quinn, P., Gutwin, C. (2015). Examining the peak-end effects of subjective experience. Proceedings of the 33rd annual ACM conference on human factors in computing systems (pp. 357-366). New York, NY: ACM. https://doi.org/10.1145/2702123.2702139

Dolan, B., Arnold, J. & Green, M.M. (2019). Establishing Trust When Assessing Learners: Barriers and Opportunities. Academic Medicine, 94(12), 1851-1853. doi: 10.1097/ACM.0000000000002982.

Hase, S, & Kenyon, C. (2007). Heutagogy: A Child of Complexity Theory. Complicity: An International Journal of Complexity and Education, 4(1), 111-118.

Jho, M. Y., & Chae, M.-O. (2014). Impact of Self-Directed Learning Ability and Metacognition on Clinical Competence among Nursing Students. The Journal of Korean Academic Society of Nursing Education, 20(4), 513–522. https://doi.org/10.5977/jkasne.2014.20.4.513

Knowles, M. S. (1970). The modern practice of adult education: andragogy versus pedagogy. New York: Association Press.

Knowles, M.S. (1975). Self-directed learning: A guide for learners and teachers. Englewood Cliffs: Prentice Hall/Cambridge.

Knowles, M.S. (1984). The Adult Learner: A Neglected Species (3rd Ed.). Houston, TX: Gulf Publishing.

Machynska, N. & Boiko, H. (2020). Andragogy = The Science of Adult Education: Theoretical Aspects. Journal of Innovation in Psychology, Education and Didactics. 24(1), 25-34.

Merriam, S.B. & Baumgartner, L.M. (2020). Knowles’s Andragogy and McClusky’s Theory of Margin, in Learning in Adulthood: A Comprehensive Guide. Jossey-Bass, pp. 117-136.

Merriam, S. B., Caffarella, R. S., & Baumgartner, L. (2007). Learning in adulthood: A comprehensive guide (3rd ed.). Jossey-Bass.

Nagoshi, Y., Hahn, P., & Littles, A. (2019). The Secret in the Care of the Learner, in Contemporary Challenges in Medical Education. Z. Zaidi, E. Rosenberg, and R.J. Beyth, eds. Gainesville: U of Florida. 146-162.

Orsini, C., Evans, P., Binnie, V., Ledezma, P., & Fuentes, F. (2015). Encouraging intrinsic motivation in the clinical setting: teachers’ perspectives from the self-determination theory. European Journal of Dental Education, 20, 102-111. doi: 10.1111/eje.12147

Pratt, D.D. (2016). Five Perspectives on Teaching: A Plurality of the Good, 2nd edition. Dave Smulders and Associates

Ryan RM, Deci EL. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. Am Psychol. 55(1):68-78. doi: 10.1037//0003-066x.55.1.68. PMID: 11392867.

Thammasitboon S, Darby JB, Hair AB, Rose KM, Ward MA, Turner TL, Balmer DF (2016). A theory-informed, process-oriented Resident Scholarship Program. Medical Education Online, 14; 21:31021. doi: 10.3402/meo.v21.31021.

van der Goot WE, Cristancho SM, de Carvalho Filho MA, Jaarsma ADC, Helmich E. (2020). Trainee-environment interactions that stimulate motivation: A rich pictures study. Medical Education, 54(3):242-253. doi: 10.1111/medu.14019.

van Merrienboer, J. (2016). How People Learn, in The Wiley Handbook of Learning Technology, N. Rushby & D.W. Surry, eds. John Wiley & Sons, pp. 15-34.

Wang, V.C.X. and Hansman, C.A. (2017). Pedagogy and Andragogy in Higher Education, in Theory and Practice of Adult and Higher Education Victor C.X. Wang, editor. Information Age Publishing, Inc. Pp. 87 – 111.

Multiple Choice Questions

1. After sharing assessment information with your learner and identifying gaps in performance, they work with you to set learning goals and determine processes by which to improve in that area. You both agree that you as the educator will assess their progress and provide additional feedback. This is an example of applying which approach to teaching?

a. Pedagogy

b. Andragogy

c. Heutagogy

d. Synergogy

The correct answer is b. This is an example of andragogy in that the educator is promoting self-direction in setting learning goals and learning processes while retaining a significant role in assessment in a skill that addresses current competencies. In contrast, a pedagogical approach would consist of the educator controlling all aspects of the learning process whereas in heutagogy, the learner would be in control of all aspects of the learning process and the focus would be on applying competencies in a complex environment.

2. Which of the following teaching-learning approaches would best support a self-determined learner in a clinical/experiential setting? Encourage them to …

a. make a detailed self-directed learning plan for your feedback

b. analyze how they reached a particular clinical judgment

c. select a topic to present from a rotation-specific list

d. research the use of a particular therapeutic intervention

The correct answer is b. By encouraging the learner to analyze how they reached a particular clinical judgment, you are promoting double-loop learning and self-regulation, both of which are important for adapting to complex environments. The other approaches (providing feedback on a learning plan prior to execution, or limiting areas of exploration by having the learner select a topic from a list or research a particular therapeutic intervention) are more strongly associated with self-directed learning.

3. Which of the following actions taken by the educator in a clinical/experiential setting is an effective approach to honoring the role of the trainee’s prior experience in learning?

a. Incorporating your own stories into a clinically-based lecture

b. Asking questions to uncover knowledge gaps

c. Providing detailed clinical explanations to build cognitive schema

d. Demonstrating the proper way to accomplish a common psychomotor skill

The correct answer is b. By asking questions, the educator can uncover current knowledge and skills as well as identify any misconceptions or gaps. Incorporating your own stories is a powerful method for teaching but centers the educator’s prior experience. Providing detailed explanations of clinical cases does not help learners activate their own prior learning. Demonstrating a common psychomotor skill that the learner has already mastered does not help learners expand on their current knowledge.

4. When working with a group of trainees, you notice that they do not appear to be motivated to learn what you identify as an important clinical skill. Which of the following interventions could be used to enhance intrinsic motivation for learning this skill?

a. A test at the end of the week

b. A friendly competition with a reward

c. An explanation of why the skill is important

d. A reminder that it will look good on their record

The correct answer is c. Explaining why a particular skill is important to their practice will enhance intrinsic motivation. The use of a test, friendly competition, or the promise that this will look good as they move toward credentialing are all examples of extrinsic motivators.